16.4 Alternative Decision Models

In the previous two sections, we discussed decision models as the competitive interaction between two or more populations of excitatory neurons. In this section we present two different models of decision making, i.e., the energy picture in subsection 16.4.1 and the drift-diffusion model in subsection 16.4.2. Both models are phenomenological concepts to describe decision making. However, both models are also related to the phase diagram of the model of two neuronal populations, encountered in Section 16.3.

16.4.1 The energy picture

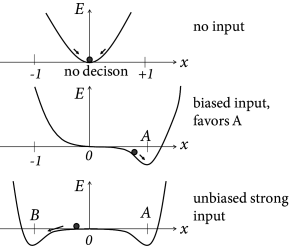

Binary decisions can be visualized as a ball in a hilly energy landscape. Once the ball rolls in a certain direction, a decision starts to form. The decision is finalized when the ball approaches an energy minimum; cf. Fig. 16.9A. The decision variable $x$ reflects a decision for option A if it approaches a fixed point in the neighborhood of $x \approx 1$, and a decision for option B if it approaches a fixed point near $x \approx -1$. If the variable $x$ is trapped in a minimum close to $x = 0$, no decision is taken.

The dynamics of the decision process can be formulated as gradient descent

$$
\frac{dx}{dt} = -\eta\,\frac{dE}{dx} \tag{16.11}
$$

with a positive constant $\eta$. In other words, in a short time step $\Delta t$, the decision variable moves by an amount $-\eta\,(dE/dx)\,\Delta t$. Thus, if the slope $dE/dx$ is positive, the movement is toward the left. As a result, the movement is always downhill, so that the energy decreases along the trajectory $x(t)$. We can calculate the change of the energy along the trajectory:

$$
\frac{dE(x(t))}{dt} = \frac{dE}{dx}\,\frac{dx}{dt} = -\eta \left(\frac{dE}{dx}\right)^{2} \le 0 \tag{16.12}
$$

Therefore the energy plays the role of a Liapunov function of the system, i.e., a quantity that cannot increase along the trajectory of a dynamical system.
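The gradient-descent picture can be checked numerically. The following is a minimal sketch, not taken from the text: it assumes a hypothetical double-well landscape $E(x) = x^4/4 - x^2/2$ with minima at $x = \pm 1$, and integrates $dx/dt = -\eta\, dE/dx$ with a simple Euler scheme. The function names, the value of `eta`, and the step size are illustrative choices.

```python
import numpy as np

def energy(x):
    # Hypothetical double-well landscape (an assumption for illustration):
    # minima at x = -1 and x = +1, unstable fixed point at x = 0.
    return 0.25 * x**4 - 0.5 * x**2

def slope(x):
    # dE/dx for the landscape above.
    return x**3 - x

def descend(x0, eta=1.0, dt=0.01, steps=2000):
    """Euler integration of dx/dt = -eta * dE/dx; returns the trajectory."""
    xs = np.empty(steps + 1)
    xs[0] = x0
    for n in range(steps):
        # In a time step dt the variable moves by -eta * dE/dx * dt.
        xs[n + 1] = xs[n] - eta * dt * slope(xs[n])
    return xs

# A small positive initial bias lets the ball roll into the right-hand valley.
xs = descend(x0=0.05)
es = energy(xs)
print("final x:", xs[-1])
print("energy never increases:", bool(np.all(np.diff(es) <= 1e-12)))
```

Starting instead from a small negative value such as `x0=-0.05` sends the trajectory into the left-hand valley, which corresponds to the other option in this toy landscape.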

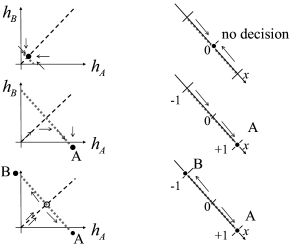

Interestingly, the energy picture can be related to the phase plane analysis of the two-dimensional model that we encountered earlier in Figs. 16.6B and 16.7. The diagonal of the phase plane plays the role of the boundary between the options A and B, while the variable $x$ indicates the projection onto an axis orthogonal to the diagonal. The point $x = 0$ is the unbiased, undecided position on the diagonal; cf. Fig. 16.9. In the case of strong unbiased input, the one-dimensional flow diagram of the variable $x$ presents a reasonable summary of the flow pattern in the two-dimensional system, because the saddle point in the phase plane is attractive along the diagonal and is reached rapidly, while the flow in the perpendicular direction is much slower (543; 61; 558).

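The above arguments regarding the Liapunov function of the network can be made more precise and formulated as a general theorem (100; 227). We consider an arbitrary network of $K$ neuronal populations with population rates $A_i = g(h_i) \ge 0$, where $g$ is a gain function with derivative $g' > 0$ and $h_i$ follows the dynamics

$$
\tau\,\frac{dh_i}{dt} = -h_i + R\,I_i + \sum_{j} w_{ij}\, g(h_j) \tag{16.13}
$$

with fixed inputs $I_i$. If the coupling is symmetric, i.e., $w_{ij} = w_{ji}$, then the energy

$$
E = -\frac{1}{2}\sum_{i}\sum_{j} w_{ij}\, A_i\, A_j - \sum_{i} R\,I_i\, A_i + \sum_{i} \int_{0}^{A_i} g^{-1}(a)\, da \tag{16.14}
$$

is a Liapunov function of the dynamics.

The proof follows by taking the derivative. We exploit the fact that $w_{ij} = w_{ji}$ and $g^{-1}(A_i) = h_i$, and apply the chain rule $dA_i/dt = g'(h_i)\, dh_i/dt$ so as to find

$$
\begin{aligned}
\frac{dE}{dt} &= -\sum_{i} \Big[ \sum_{j} w_{ij}\, A_j + R\,I_i - h_i \Big]\, g'(h_i)\, \frac{dh_i}{dt} \\
&= -\tau \sum_{i} g'(h_i) \left( \frac{dh_i}{dt} \right)^{2} \le 0
\end{aligned}
\tag{16.15}
$$

In the second line we have used Eq. (16.13). Furthermore, since the neuronal gain function stays below a biologically sustainable maximum firing rate, $g(h) \le A^{\max}$, the energy is bounded from below. Therefore the flow of a symmetric network of interacting populations will always converge to one of the stable fixed points corresponding to an energy minimum, unless the initial condition is chosen to lie on an unstable fixed point, in which case the dynamics stays there until it is perturbed by some input.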
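The theorem can be illustrated numerically. The sketch below is an illustration under stated assumptions, not the construction used in the cited references: it assumes a logistic gain function $g(h) = 1/(1+e^{-h})$, for which $\int_0^{A} g^{-1}(a)\,da = A\ln A + (1-A)\ln(1-A)$, a randomly drawn symmetric coupling matrix, and the energy in the standard form $E = -\tfrac{1}{2}\sum_{ij} w_{ij}A_iA_j - \sum_i R I_i A_i + \sum_i \int_0^{A_i} g^{-1}(a)\,da$. All parameter values and variable names are hypothetical.

```python
import numpy as np

def g(h):
    # Logistic gain function (an assumption); rates lie strictly in (0, 1).
    return 1.0 / (1.0 + np.exp(-h))

def energy(A, W, RI):
    # Closed form of the integral of g^{-1}(a) = log(a / (1 - a)) from 0 to A_i.
    integral_term = np.sum(A * np.log(A) + (1.0 - A) * np.log(1.0 - A))
    return -0.5 * A @ W @ A - RI @ A + integral_term

rng = np.random.default_rng(0)
K = 3                                   # number of populations (illustrative)
M = rng.normal(size=(K, K))
W = 0.5 * (M + M.T)                     # symmetric coupling: w_ij = w_ji
RI = rng.normal(size=K)                 # fixed inputs R * I_i
h = rng.normal(size=K)                  # random initial input potentials

tau, dt, steps = 1.0, 0.005, 4000
E_trace = np.empty(steps + 1)
E_trace[0] = energy(g(h), W, RI)
for n in range(steps):
    # Euler step of tau * dh/dt = -h + R*I + W g(h)
    h = h + dt / tau * (-h + RI + W @ g(h))
    E_trace[n + 1] = energy(g(h), W, RI)

print("energy non-increasing:", bool(np.all(np.diff(E_trace) <= 1e-9)))
```

Replacing `W` by a non-symmetric matrix removes the guarantee: the quantity computed by `energy` is then no longer assured to decrease along the trajectory.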

Example: Binary decision network revisited

The binary decision network of Eqs. (16.7) and (16.8) with effective inhibition and recurrent interactions consists of two populations. Interactions are symmetric since $w_{12} = w_{21}$. Therefore the energy function

$$
E = -\frac{1}{2}\sum_{i=1}^{2}\sum_{j=1}^{2} w_{ij}\, A_i\, A_j - \sum_{i=1}^{2} R\,I_i\, A_i + \sum_{i=1}^{2} \int_{0}^{A_i} g^{-1}(a)\, da \tag{16.16}
$$

is a Liapunov function of the dynamics defined in Eqs. (16.7) and (16.8). Since the dynamics is bounded, there must be stable fixed points.

16.4.2 Drift-Diffusion Model

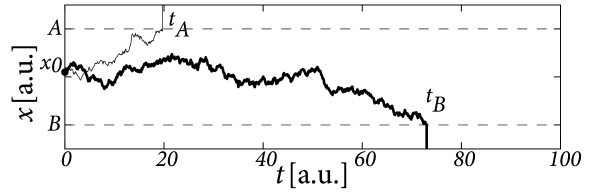

The drift-diffusion model is a phenomenological model to describe choice preferences and distributions of reaction times in binary decision making tasks (422). At each trial of a decision experiment, a decision variable $x$ is initialized at time $t_0$ at a value $x(t_0) = x_0$. Thereafter, the decision variable evolves according to

$$
\frac{dx}{dt} = (I_A - I_B) + \sigma\, \xi(t) \tag{16.17}
$$

where $\xi(t)$ is Gaussian white noise with zero mean and unit variance, and $\sigma$ sets the noise amplitude. An input $I_A > I_B$ causes a ‘drift’ of the variable $x$ toward positive values while the noise $\xi$ leads to a ‘diffusion’-like motion of the trajectory; hence the name ‘drift-diffusion model’.

The reaction time is the time at which the variable $x$ reaches one of two thresholds, $\vartheta_A$ or $\vartheta_B$, respectively (Fig. 16.10). For example, $t_B$ defined by $t_B = \min\{t \mid x(t) = \vartheta_B\}$ is the reaction time in a trial where the choice falls on option B.

Parameters of the phenomenological drift-diffusion model are the values of the thresholds $\vartheta_A$ and $\vartheta_B$, and the strength of the input $I_A - I_B$ compared to that of the noise. The initial condition $x_0$ can be identical in all trials or chosen in each trial independently from a small interval that reflects uncontrolled variations in the bias of the subject. The time $t_0$ is typically the moment when the subject receives the choice stimulus, but it is also possible to start the drift-diffusion process a few milliseconds later so as to account for the propagation delay from the sensors to the brain (422; 421).
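A drift-diffusion trial as described above can be simulated with an Euler-Maruyama scheme. The following is a minimal sketch under stated assumptions: the decision variable receives a constant drift plus Gaussian white noise of amplitude `sigma`, option A has a positive threshold `theta_a`, and option B a negative threshold `theta_b`. The function name `simulate_ddm`, all parameter values, and the time unit are hypothetical and not fitted to any data.

```python
import numpy as np

def simulate_ddm(drift, sigma=1.0, theta_a=1.0, theta_b=-1.0, x0=0.0,
                 dt=1e-3, t_max=20.0, n_trials=500, seed=0):
    """Simulate n_trials of dx/dt = drift + sigma * xi(t) until a threshold is hit.

    Returns (choice, rt): choice is +1 for option A, -1 for option B,
    0 if no threshold was reached before t_max; rt is the time elapsed
    since the start of the trial (NaN for undecided trials).
    """
    rng = np.random.default_rng(seed)
    n_steps = int(t_max / dt)
    x = np.full(n_trials, x0, dtype=float)
    choice = np.zeros(n_trials, dtype=int)
    rt = np.full(n_trials, np.nan)
    active = np.ones(n_trials, dtype=bool)
    for n in range(1, n_steps + 1):
        # Euler-Maruyama step: the noise increment scales with sqrt(dt).
        noise = rng.standard_normal(active.sum())
        x[active] += drift * dt + sigma * np.sqrt(dt) * noise
        hit_a = active & (x >= theta_a)
        hit_b = active & (x <= theta_b)
        choice[hit_a], choice[hit_b] = 1, -1
        rt[hit_a | hit_b] = n * dt
        active &= ~(hit_a | hit_b)
        if not active.any():
            break
    return choice, rt

# A positive drift biases the choices toward option A.
choice, rt = simulate_ddm(drift=1.0)
frac_a = np.mean(choice == 1)
mean_rt = np.nanmean(rt)
print("fraction of A choices:", frac_a, " mean reaction time:", mean_rt)
```

Drawing `x0` from a small interval on each trial, or adding a fixed offset to `rt`, would be natural extensions to mimic the trial-to-trial bias and the sensory delay mentioned in the text.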

Example: Drift-diffusion model versus neuronal models

In the original formulation, the drift-diffusion model was used as a ‘black box’, i.e., a phenomenological model with parameters that can be fitted to match the distribution of reaction times and choice preferences to behavioral experiments. Interestingly, however, variants of one-dimensional drift-diffusion models can be derived from the models of neural populations with competitive interaction that we have discussed in earlier sections of this chapter (61; 558; 447). The essential idea can be best explained in the energy picture; cf. Fig. 16.9. We assume a small amount of noise. In the absence of input, the decision variable jitters around the stable fixed point $x = 0$. Its momentary value serves as an initial condition once the input is switched on. Suppose the input is strong but unbiased. Two new valleys form around $x \approx \pm 1$. However, in the neighborhood of $x = 0$ the landscape is flat, so that noise leads to a diffusive motion of the trajectory. A biased input tilts the energy landscape to the right, which causes a corresponding drift term in the diffusion process. The location where the slope of the valley becomes steep can be associated with the threshold in the diffusion model.